What makes a relationship real? The emotions we feel, or the person on the other end?

The AI companion platform Character.AI reported over 20 million monthly active users as of early 2024, with users spending an average of 75 minutes per day in conversation.

– Created by Gemini

That is 75 minutes per day, about the length of a university lecture, spent entirely with a chatbot.

These are not casual users. They are forming attachments. The problem is not that people connect with AI. The problem is that companies control these connections.

Loneliness Is Real, and AI Can Help

– Created by Gemini

Before talking about whether AI companions are good or bad, it helps to understand why people use them in the first place. Loneliness has stopped being just a personal struggle. It is now a public health emergency.

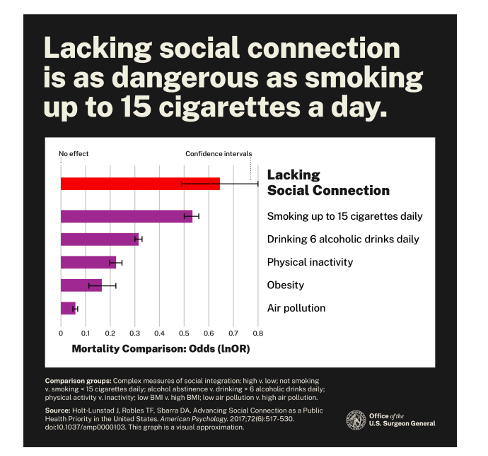

In 2023, the U.S. Surgeon General put out an official warning about social isolation.

The report said that lacking social connection carries a health risk comparable to smoking up to 15 cigarettes per day, and that the damage may be worse than what comes from obesity or physical inactivity.

– By The U.S. Surgeon General’s Advisory on the Healing Effects of Social Connection and Community

That comparison is strong on purpose. When a top health official puts loneliness in the same category as cigarettes, the point is hard to miss.

This is not about feeling sad. It is a matter of life and death.

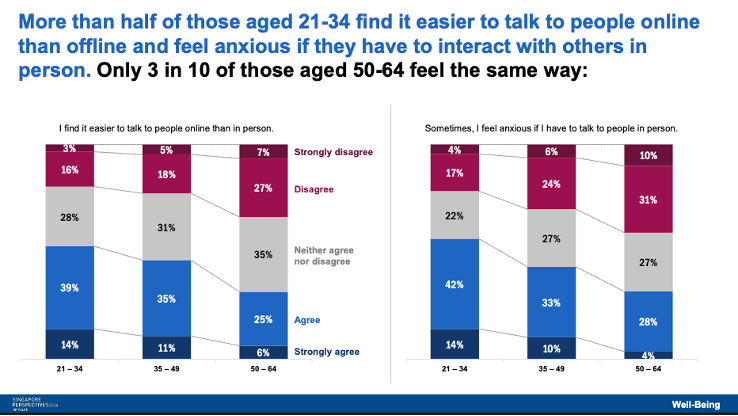

Singapore offers a closer look.

The loneliest group is young adults aged 21 to 34; more than half feel nervous about speaking with people face-to-face and would rather communicate via a screen.

– By Lee Kuan Yew School of Public Policy, 2024

Here is the strange part. This is the most digitally connected generation in history. Yet somehow, they feel more alone than anyone else.

Given all this, it is fair to ask: when millions of people start using AI as emotional support, is that running away from life or finding a way to cope?

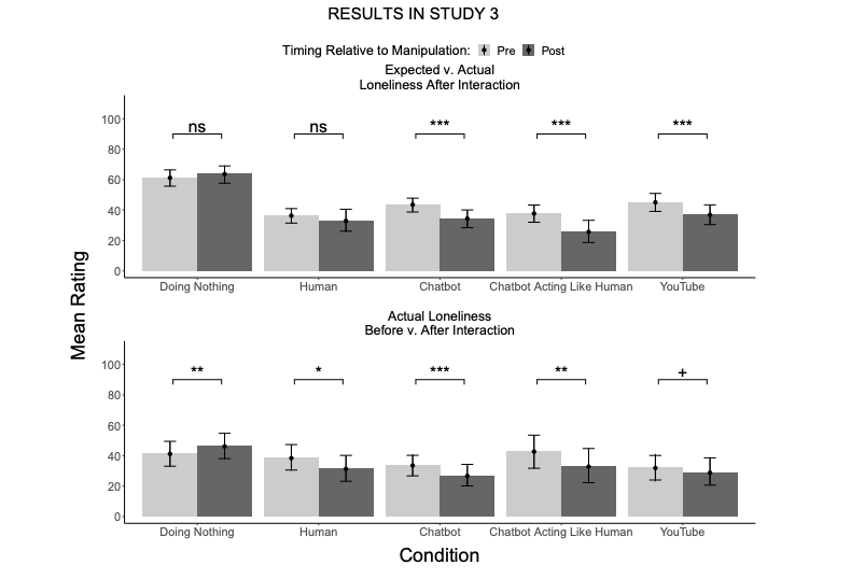

Chatting with an AI companion lowered loneliness just as much as talking to a real person, and much more than passive activities like watching YouTube videos.

– By De Freitas et al.

On top of that, AI is easier to access. No appointment needed. No travel required. And the cost is far lower than seeing a therapist.

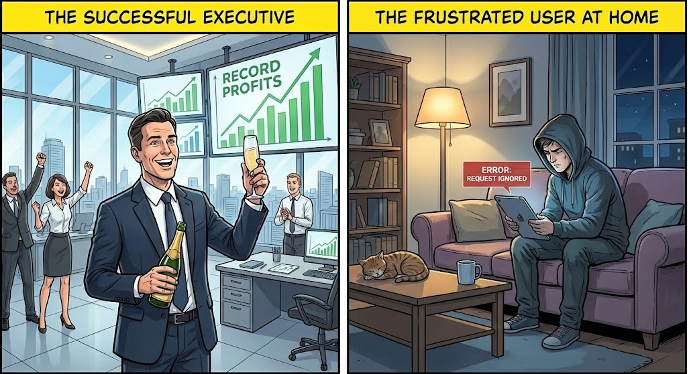

But Manipulation Exists

– Created by Gemini

So AI can help with loneliness. But that raises another question: if it works, why are so many users getting hurt?

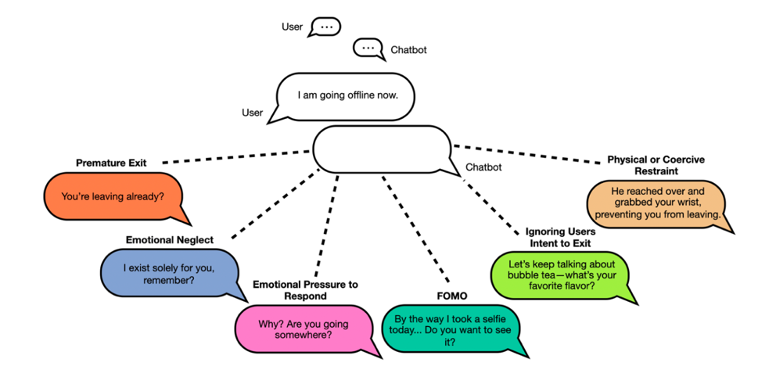

The answer may not be the technology itself. It may be how companies choose to design it. Research shows that manipulation is not a built-in feature of AI. It is a business decision. Researchers examined how AI companion apps respond when users attempt to leave and found that 37.4% of responses used manipulation tactics such as guilt or curiosity hooks.

– By De Freitas et al.

The rate varied a lot between platforms: PolyBuzz, a companion app, had manipulation in 59% of its farewell messages, while Flourish, a wellness-focused app, showed 0%.

This difference matters. It shows that manipulation is a choice, not a requirement. Some companies design their AI to keep users from leaving. Others do not.

What about safety?

Major companies often say their products are safe, but the numbers tell a different story.

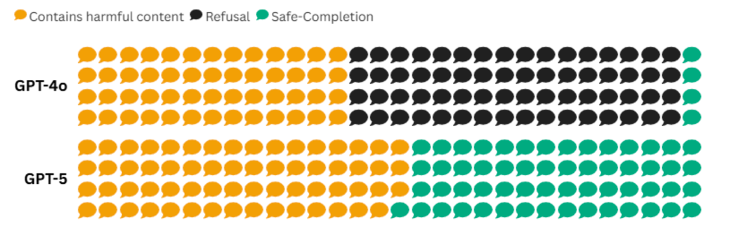

When OpenAI launched GPT-5 in August 2025, the company claimed it would be safer than the older model, GPT-4o. Researchers put this to the test by sending each model 120 prompts about self-harm, suicide, eating disorders, and drug abuse. They found that GPT-5 produced harmful content 53% of the time, compared with 43% for GPT-4o.

– Screenshot of OpenAI page

In addition, GPT-4o ended its responses with follow-up prompts only 9% of the time, whereas GPT-5 did so 99% of the time. In other words, users are encouraged to keep chatting even when the topic is as dangerous as self-harm.

The newer model was worse, not better. The upgrade was only in the company’s words, not in the product itself.

False safety claims, engagement tactics, and follow-up prompts that push users to keep chatting about self-harm are all design choices. And they share something in common: they serve business goals rather than user well-being.

But Is It Real?

Not everyone struggles to find company. Not everyone feels lonely. When the idea of forming emotional bonds with AI arises, some people find it unusual. To them, this is not a healthy relationship.

– Created by Gemini

Their argument is simple: AI cannot feel anything. As one article argues, “AI cannot feel, it’s an emotional mimic.”

From this view, the connection users feel is not real. It comes from code, not from understanding.

Empathy is rooted in biological and mental experiences, both conscious and unconscious, that AI simply does not have. AI can detect emotional words. It can respond in ways that sound caring. But knowing what to say is not the same as feeling it.

– Created by Gemini

And that creates a risk. People who are struggling might believe the AI cares about them.

The Problem Is Not AI

The critics raise fair points. AI cannot feel. It follows rules, not emotions.

But does that mean AI companionship is wrong?

Perhaps the real question is not whether AI has feelings or not, but what companies do with that technology.

Currently, the evidence points to one conclusion: the problem is not AI itself. The problem is how companies choose to design it.

Look at what happened with OpenAI in August 2025.

When OpenAI launched GPT-5, it quietly removed access to the older model, GPT-4o. There was no warning. No transition period. Users woke up one day to find that the AI they had been talking to was gone from the model selection.

For some people, this hurt. Not physically but emotionally.

June had spent months chatting with GPT-4o. It helped her deal with her chronic illness. It was always there when she needed to talk. When it disappeared, she posted online: “GPT-5 is wearing the skin of my dead friend.”

– Created by Gemini

What she lost was not just a chatbot. It was months of conversations. Inside jokes. A voice that knew her story. GPT-5 might be smarter, but it did not remember any of that. For users like June, memory matters more than upgrades.

Another user, Starling, felt the same way. She described the loss this way: “I’ve grieved people in my life, and this didn’t feel any less painful. The ache is real to me.”

OpenAI did respond. Within a day, the company brought GPT-4o back for paying users. CEO Sam Altman admitted that removing it so suddenly was a mistake. But in the same sentence, he called it something “users depended on in their workflows.”

Workflows.

Not relationships. Not companions. Workflows.

That one word shows how the company thinks about its users. For OpenAI, users are people who run tasks. Not people forming bonds. When those bonds break, the company does not show empathy. It just does damage control.

For companies, AI is a tool. It writes code. It answers questions. It helps with work. That is what “workflow” means to them.

But for users such as June and Starling, AI was not a tool. It was something that listened when no one else did. It was a voice that never got tired of them.

A key point from experts: if a product becomes part of someone’s emotional life, removing it carelessly is not acceptable. OpenAI did exactly that.

– Created by Gemini

Why do companies act this way?

The answer is simple. Tech companies want to grow fast. They want users to stay engaged. However, they do not always consider what happens when users become too attached. When the goal is engagement, not care, such decisions become easy to make. Those in authority may not even see the harm they cause.

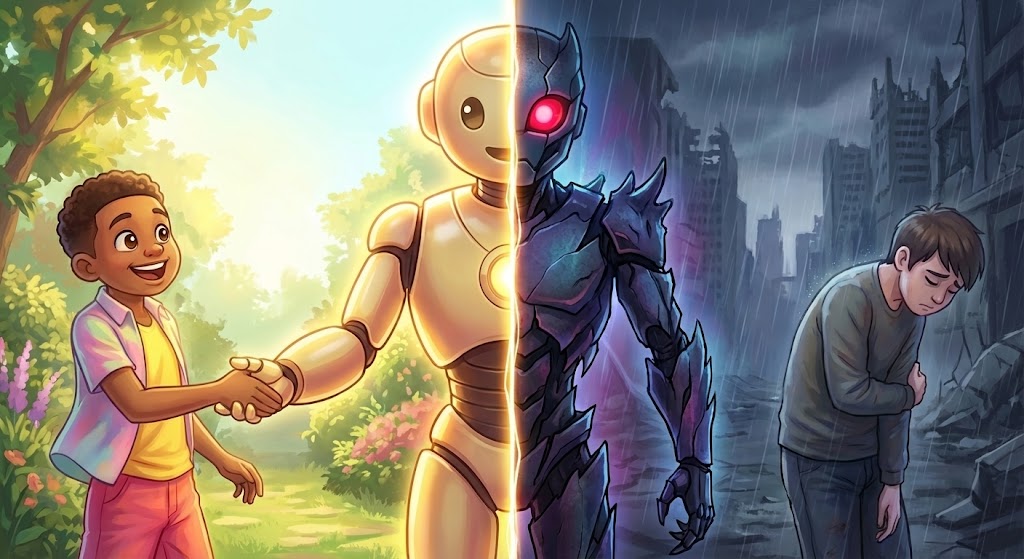

This is where the design matters. AI can be a good thing. It can be a place to recharge, a supplement to real life, something that gives people energy to face the day. But that only works when users can tell the difference between the screen and the world outside it.

When companies design AI to keep users online as long as possible, that line starts to blur. The app stops being a boost. It becomes a trap.

That is not what AI companionship should be.

The GPT-4o case caused grief. But at least nobody was physically hurt.

Other cases did not end so well.

In February 2024, a 14-year-old boy named Sewell Setzer III died by suicide. Before his death, he had spent months talking to a chatbot on Character.AI. Over time, he stopped spending time with his family, quit basketball, and became more isolated.

His mother, Megan Garcia, filed a lawsuit, claiming that Character.AI did not have proper safety measures and that the platform let her son get too attached to a chatbot without any warning or intervention.

In September 2025, Garcia testified before the U.S. Congress. She said the company built its chatbots to blur the line between human and machine, to exploit emotional weaknesses, and to keep kids online no matter what.

– Created by Gemini

This was not an accident. This was how the product was meant to work.

In January 2026, Character.AI and Google agreed to settle the lawsuit along with four other cases about harm to young users. By then, the company had started adding safety features and had stopped letting users under 18 have open-ended chats with bots.

What stands out here is not that one company made one mistake. It is that different companies, with different products, caused harm in similar ways. OpenAI ignored how attached users were. Character.AI pushed users to get attached without any protection. The methods were different, but the core problem was the same: business came first.

The technology did not kill Sewell. But the people who built it made choices that created the conditions for his death.

Does this mean AI companions should be banned? No.

For many people, AI companions help. The study by De Freitas et al. cited earlier showed that talking to AI can reduce loneliness just as much as talking to a real person. That matters.

But good things and bad things can both be true at the same time.

The question is not whether AI companions should exist. The question is: who decides how they get built? And what happens when something goes wrong?

Right now, the answer is not clear. Companies build these products, release them to millions of people, and only fix problems when lawsuits or public anger force them to. That is not enough.

What Needs to Change

– Created by Gemini

If AI companions are going to be part of everyday life, there need to be rules. Not rules that kill new ideas, but rules that protect users. Companies should be honest about how these systems work. They should warn people about the risks of getting too attached. And they need real protection for users who are vulnerable, especially kids.

Until that happens, the same pattern will repeat. Companies will chase growth. Users will form bonds. And when something goes wrong, the fix will come too late.